Resources Roundup: She didn't just say what I think she did, did she?

A selection of unpopular, unexpected, or unusual takes on data science, analytics and machine learning.

Welcome back to another resources roundup, and this time, I’m feeling a little sassy. It’s been too long since anyone told me I had the sexiest job of the century, and high time to bring that sexy back, courtesy of a little controversy. So this post is all about spicy takes on data science 🌶️🌶️🌶️.

Blog post: For data scientists, analysts, and the people who know and love them.

Let’s start with the most titillating topic of all. That’s right… I’m talking about…

Data cleaning.

Ok, so I’m clearly kidding; data cleaning doesn’t exactly get a lot of attention, especially compared to AI, machine learning, or even just data analysis. But why is that? Hardly seems fair, considering we all know how important data collection and processing are. We can all repeat the adage, “garbage in, garbage out.” In this post, Randy calls BS on the idea that data cleaning isn’t real data science work. He points out that cleaning data imposes judgments on it, and that, my friends, is analysis. It also allows you to understand and reason about your data, and to build models on top of it that are more accurate, less opaque, and potentially less biased. Thus, his post suggests a much sexier new name for this crucial task. 😉

Blog post: For anyone who cares about state-of-the-art models and calibration.

Following from data cleaning, the next step* in a machine learning workflow is typically to prepare the data for modelling. Now, let’s say you have a fraud detection dataset where only 0.5% of the input rows actually represent cases of fraud. It’s a classic imbalanced dataset problem, so what do you do? Upsample the minority class, and, perhaps, downsample the majority one?

That’s exactly what Christoph thought you would say, and he’s, frankly, very disappointed. Check out Don’t “fix” your imbalanced data to find out why.

Update: After Christoph got some push back on this advice, he doubled down on the recommendation, and provided some solid alternative strategies for dealing with imbalanced data.
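To make the calibration worry a little more concrete, here is a minimal sketch of what naive oversampling does to the fraud rates a model would learn from. Everything in it is an assumption for illustration: the counts, the single `flagged_as_risky` feature, and the choice to duplicate every fraud row a whole number of times. It is not taken from Christoph’s post.

```python
from collections import Counter

# Hypothetical dataset: (flagged_as_risky, is_fraud) -> number of rows.
# 500 fraud rows out of 100,000, i.e. the 0.5% fraud rate from the text.
original = Counter({
    (True, True): 400,
    (False, True): 100,
    (True, False): 9_950,
    (False, False): 89_550,
})


def fraud_rate(counts, flagged=None):
    """Share of fraud rows, optionally restricted to one value of the flag."""
    rows = {key: n for key, n in counts.items()
            if flagged is None or key[0] == flagged}
    fraud = sum(n for (_, is_fraud), n in rows.items() if is_fraud)
    return fraud / sum(rows.values())


def oversample_minority(counts):
    """Duplicate every fraud row until both classes have the same size."""
    fraud = sum(n for (_, is_fraud), n in counts.items() if is_fraud)
    legit = sum(n for (_, is_fraud), n in counts.items() if not is_fraud)
    factor = legit // fraud  # whole-number duplication keeps the sketch exact
    return Counter({key: n * factor if key[1] else n
                    for key, n in counts.items()})


balanced = oversample_minority(original)

print(f"Overall fraud rate, original: {fraud_rate(original):.3f}")
print(f"Overall fraud rate, balanced: {fraud_rate(balanced):.3f}")
print(f"P(fraud | flagged), original: {fraud_rate(original, flagged=True):.3f}")
print(f"P(fraud | flagged), balanced: {fraud_rate(balanced, flagged=True):.3f}")
```

In this toy setup, a flagged transaction is fraudulent about 3.9% of the time in the original data, but about 88.9% of the time after balancing. The ranking of risky versus non-risky rows is unchanged, yet any probability estimated from the balanced data no longer reflects the real world.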

*By the way, I’m aware that this post has skipped the initial stage of gathering data. I just didn’t have any hot takes on that topic at this time. I appreciate your understanding 🙏.

Research paper: For anyone who hates elbows.

Alright, let’s change the scenario and say you want to perform K-Means clustering to discover clusters within your data. How do you determine the right number for k? The elbow method, you say?

Oh dear. Have you never read Eric Schubert’s aptly named paper, “Stop using the elbow criterion for k-means: and how to choose the number of clusters instead”? Well, now you can. With takeaways like this:

Given the prevalence of the elbow method in education, online media (such as Wikipedia), and even clustering research … it appears to be due to warn of using this method and to emphasize that much better alternatives such as the variance-ratio criterion, … the Bayesian Information Criterion, or the Gap statistics should always be preferred instead.

… you get to feel silly and enlightened, all at the same time.
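If you are curious what one of those alternatives looks like in practice, here is a rough sketch of the variance-ratio criterion (also known as the Calinski-Harabasz index) used to pick k. It is not code from the paper. The data is synthetic (three well-separated blobs), and the tiny pure-Python k-means with a deterministic farthest-point initialisation is a simplification chosen here to keep the example dependency-free; in real work you would likely reach for a library implementation.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coord) / n for coord in zip(*points))


def kmeans(points, k, iterations=50):
    """Plain Lloyd's algorithm; returns one cluster label per point."""
    # Assumption: farthest-point initialisation, so the result is deterministic.
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(sq_dist(p, c) for c in centers)))
    labels = [0] * len(points)
    for _ in range(iterations):
        labels = [min(range(k), key=lambda j: sq_dist(p, centers[j])) for p in points]
        new_centers = []
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            # Keep the old center if a cluster ends up empty.
            new_centers.append(mean_point(members) if members else centers[j])
        if new_centers == centers:
            break
        centers = new_centers
    return labels


def variance_ratio(points, labels):
    """Between-cluster over within-cluster dispersion, each scaled by its degrees of freedom."""
    clusters = {}
    for p, label in zip(points, labels):
        clusters.setdefault(label, []).append(p)
    n, k = len(points), len(clusters)
    overall = mean_point(points)
    between = within = 0.0
    for members in clusters.values():
        centroid = mean_point(members)
        between += len(members) * sq_dist(centroid, overall)
        within += sum(sq_dist(p, centroid) for p in members)
    return (between / (k - 1)) / (within / (n - k))


# Synthetic data: three tight, well-separated blobs of 50 points each.
rng = random.Random(0)
true_centers = [(0.0, 0.0), (10.0, 0.0), (5.0, 9.0)]
points = [(cx + rng.gauss(0, 0.5), cy + rng.gauss(0, 0.5))
          for cx, cy in true_centers for _ in range(50)]

scores = {k: variance_ratio(points, kmeans(points, k)) for k in range(2, 7)}
best_k = max(scores, key=scores.get)

for k, score in scores.items():
    print(f"k={k}: variance ratio = {score:.1f}")
print(f"Highest variance ratio at k={best_k}")
```

Rather than squinting at a curve for a bend, you simply pick the k with the highest score. On messy real-world data the answer will be less clear-cut than on these toy blobs, which is partly why the paper lists several criteria.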

Mini-tutorial: For the statisticians, and those who are intimidated by them.

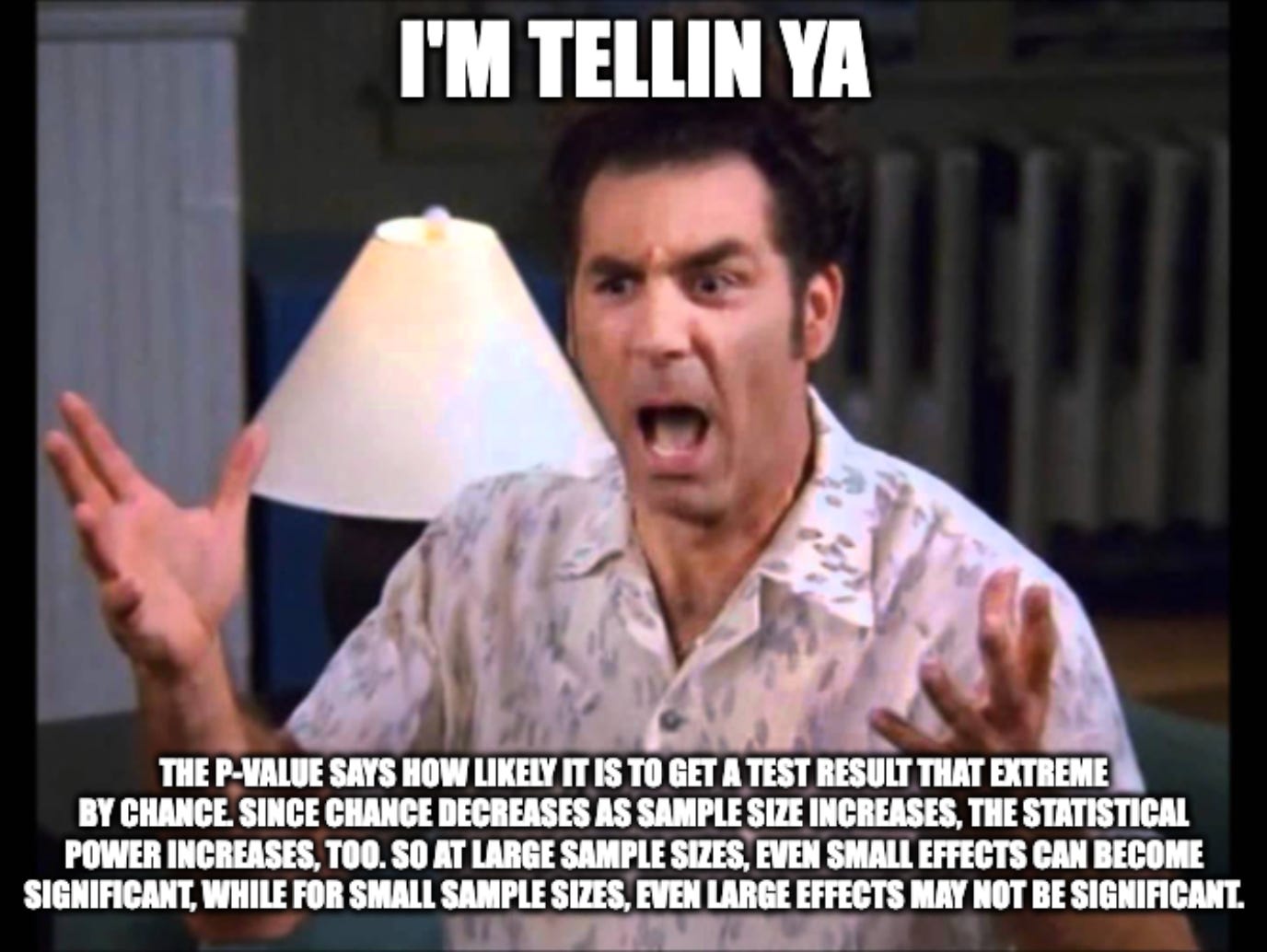

Ok, now let’s imagine you used your model to take some kind of business action. And let’s say you were very well behaved and took this action within an AB test, so you could check whether it was a good idea, or not. How will you know whether your results are significant? Any guesses? Or are you now reluctant to answer? 😉

As you might have guessed, I’m here to rain all over your p-value parade with this mini-tutorial, Effect Sizes: Why Significance Alone is Not Enough. It’s full of R code that I know you’re going to skip anyway, but the takeaways are clear and include a nice presentation of Cramér’s V, to help you interpret the relationship between sample size and effect size.
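For those who would skip the R but might tolerate a few lines of Python, here is a small sketch of the general idea. It is not the tutorial’s code, and the A/B test counts are made up for illustration. It computes a Pearson chi-square test (without continuity correction) and Cramér’s V for a 2x2 table of conversions by variant.

```python
import math


def chi_square(table):
    """Pearson chi-square statistic and total count for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    return chi2, n


def p_value_df1(chi2):
    """Upper-tail probability of a chi-square distribution with 1 degree of freedom (2x2 tables only)."""
    return math.erfc(math.sqrt(chi2 / 2))


def cramers_v(table):
    """Cramér's V: chi-square rescaled by sample size, so it ranges from 0 to 1."""
    chi2, n = chi_square(table)
    min_dim = min(len(table), len(table[0])) - 1
    return math.sqrt(chi2 / (n * min_dim))


# Hypothetical A/B test with one million users per variant.
# Rows: variant A, variant B. Columns: converted, did not convert.
ab_test = [
    [10_000, 990_000],  # 1.00% conversion
    [10_500, 989_500],  # 1.05% conversion
]

chi2, n = chi_square(ab_test)
p_value = p_value_df1(chi2)
effect = cramers_v(ab_test)

print(f"chi-square = {chi2:.2f}, p-value = {p_value:.5f}")
print(f"Cramér's V = {effect:.4f}")
```

With two million users, a difference of 0.05 percentage points comes out as comfortably “significant” (p below 0.001), while Cramér’s V sits below 0.01, which is about as small as an effect gets. Whether that tiny lift is worth acting on is a business question the p-value cannot answer.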

An essay: For data professionals experiencing an existential crisis.

So what happens if your AB test results, or your machine learning performance metrics, or the results of your exploratory data analysis, are underwhelming? What if they’re not what the company had expected, or perhaps, hoped?

Firstly, let me make it absolutely, categorically clear, that you should never attempt to lie, mislead, cover up or otherwise deceive people with data. Our skills are a great power, and we have to use them responsibly.

That’s what makes the essay The case for being biased so provocative. Read it for yourself and see what you get from it. For me, it’s that the power of data ultimately lies not just in objective truths but in its ability to emotionally connect and rally teams around a shared mission. I see this as a positive takeaway, which I can consciously try to apply in my own data science work.

A rant. But I promise, a productive one: For anyone who hasn’t heard enough of me yet.

Alright, so let’s say you’ve gotten through the entire process of building a machine learning model, and it’s working well. Now what are you going to do with it? How are you going to action the outputs?

Of course, you should have gotten clarity on that at the very beginning, with the help of product owners, business leaders, and end users. But unfortunately, this doesn’t always happen. That’s probably why, when I kicked off my team’s research into predicting Customer Lifetime Value (CLV), I noticed plenty of tutorials on building a model, but very little about real, value-driving use cases for CLV prediction. I was fortunate that our company had a plan, but this didn’t stop me getting so annoyed about the lack of information that I ended up writing a multi-part Medium odyssey about it 🤦‍♀️. In part one, I show that even analysing historical CLV data can produce a tonne of actionable insights (despite not using any ML and thus, not being sexy at all, right?). In part two, I talk about what you can actually do with a CLV prediction model. Parts three and four are coming soon, and will focus on practical lessons from conducting CLV research with real-world customers and their not-quite-Kaggle-standard data.

A bit of fun: For anyone who needs it, after reading this far…

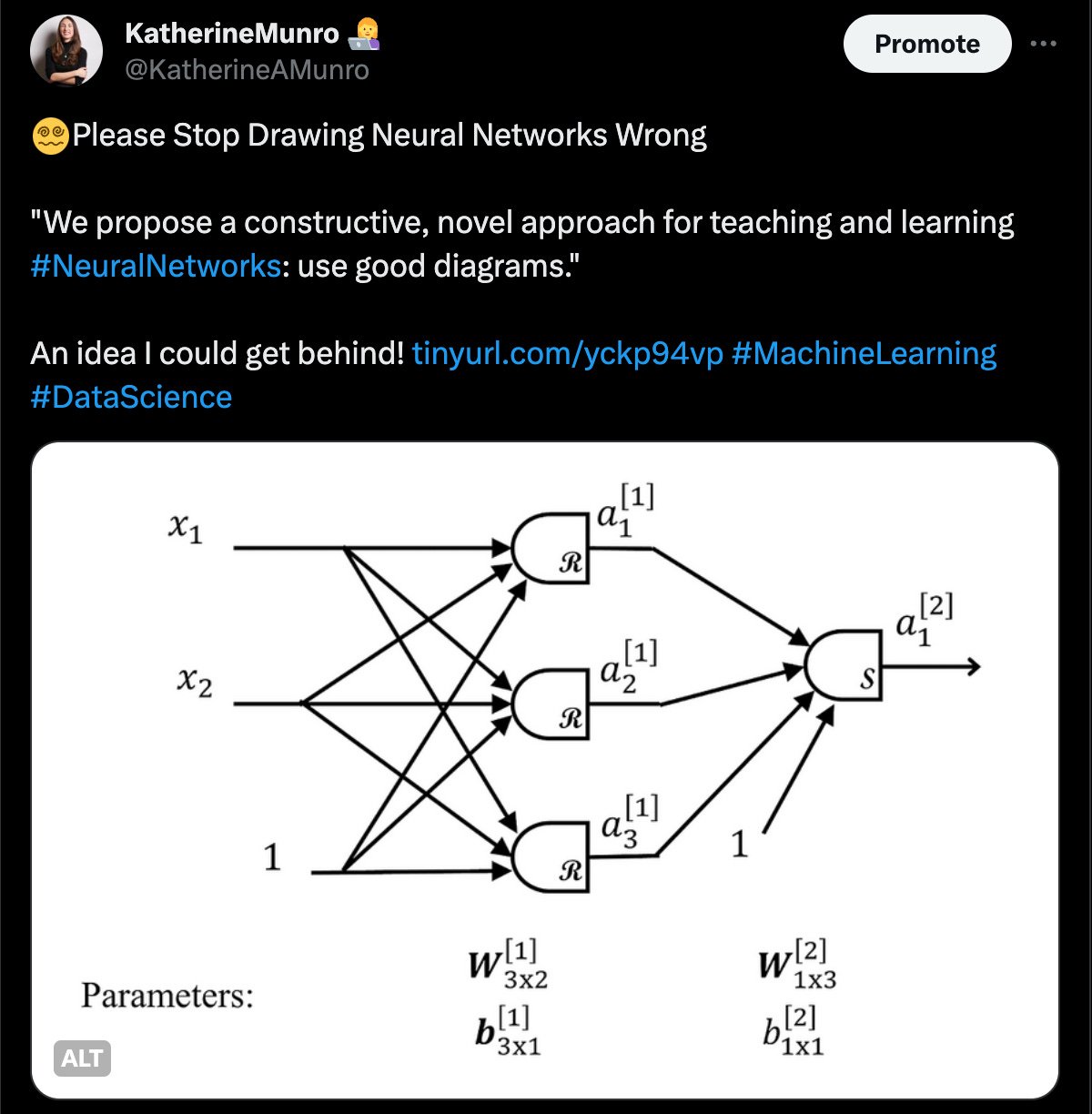

Changing the topic to wrap up this resources roundup, think you can draw a neural network? Aaron Master and Doron Bergman think not. And they might have a point 😵‍💫.