Resources Roundup: How to get your Machine Learning project to production

This resources roundup from my Twitter feed covers the stages of bringing a Machine Learning project from idea to production. There are links in here for data scientists, ML Ops experts, and anyone working in data-driven companies. I've even done a little deep dive in the model development section. Let me know what you think!

Before you start, know what the heck you’re doing:

At the start of an ML project, a couple of things are essential to get clear. What is the business problem, exactly? What kind of ML problem does this translate to? How will your model's predictions be used, and what value will they generate? Such discussions can be led by data scientists in conjunction with product and leadership teams, to ensure that everyone is on the same page. Then there are practical issues. How will you collect the data? Which features might be useful? And how will your model be deployed and monitored?

All these questions, and more, are covered in this easy-to-use brainstorming template. Even experienced practitioners can use it to streamline processes and onboard juniors.

Although the template can help set your ML project up for success, you can always miss something. It's very difficult from the starting position to predict which hurdles might arise, let alone pre-plan how to tackle them.

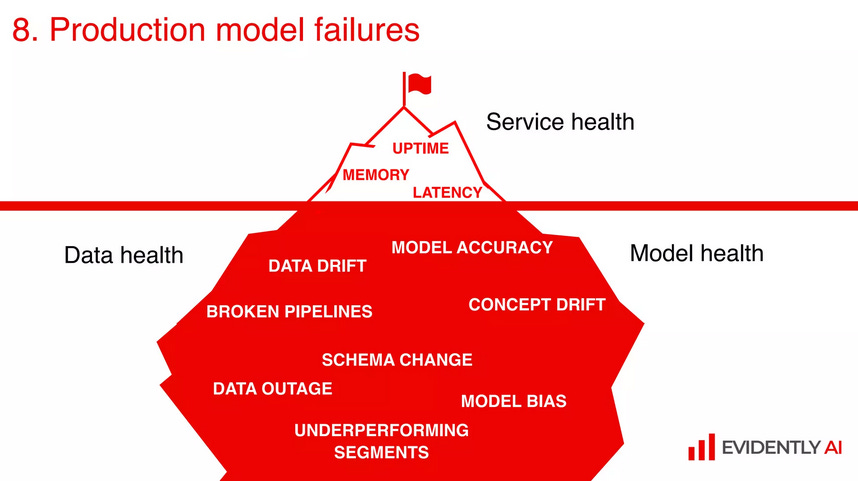

So before starting to answer those questions, I recommend you check out 'How your Machine Learning Project will Fail' (video and slides) from Elena Samuylova of EvidentlyAI. It's full of wise and practical advice, including what separates a good failure from a bad one, and how to avoid the latter.

Deep dive: Build a model. Try to understand it:

One reason ML models might fail to make it to production is that they are not interpretable. Not knowing why a model made a particular prediction causes many problems. The most obvious ones arise when predictions can affect people's lives, such as in recruiting, criminal recidivism prediction or credit approval. I'm sure you've heard these examples; in fact, I've touched on similar issues of AI ethics before.

But there are other, practical reasons for ML practitioners to want interpretable models. For a start, they can reveal issues with data preprocessing, if it turns out that the model is relying on features which don't make intuitive sense. Similarly, problems with deployed models, like data drift or overfitting, can be detected by routinely examining feature importances. Finally, interpretable models can help reveal new patterns in data, which lead to new domain knowledge.

Let me highlight that last point with an example. Consider a decision tree: a type of algorithm which learns sets of decision rules from data, which it then uses to make its predictions. Humans can often interpret these rules and make them actionable, too. Say, for instance, that you want to predict customer churn. You might suspect that if a customer has made many purchases with you before, but not for a while now, then they are a churn risk or have churned already. But how many purchases, combined with how long ago, make a customer a churn risk? A decision tree can learn thresholds to help answer this question, thus helping the company better understand its business and customers.
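To make the threshold idea concrete, here's a minimal sketch of the split search a decision tree performs at a single node. Everything in it is my own illustration: the customer data is made up, and the names `gini`, `best_split`, `n_purchases` and `days_since_last_purchase` are hypothetical. A real tree repeats this search recursively on each branch, and in practice you would reach for a library rather than roll your own.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels (0.0 means perfectly pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(rows, labels, feature_names):
    """Return (feature, threshold, weighted_gini) for the best single split.

    Rows with value <= threshold go left and the rest go right,
    which is the kind of rule a decision tree learns at each node.
    """
    best = None
    n = len(rows)
    for j, name in enumerate(feature_names):
        values = sorted({row[j] for row in rows})
        # Candidate thresholds: midpoints between consecutive distinct values.
        for lo, hi in zip(values, values[1:]):
            threshold = (lo + hi) / 2
            left = [y for row, y in zip(rows, labels) if row[j] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[j] > threshold]
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if best is None or score < best[2]:
                best = (name, threshold, score)
    return best

# Made-up toy data: (past purchases, days since last purchase) and churn label.
FEATURES = ["n_purchases", "days_since_last_purchase"]
customers = [(12, 5), (8, 20), (15, 35), (3, 40),
             (10, 95), (7, 120), (20, 150), (2, 200)]
churned = [0, 0, 0, 0, 1, 1, 1, 1]

feature, threshold, impurity = best_split(customers, churned, FEATURES)
print(f"Rule: {feature} <= {threshold} (weighted Gini {impurity:.2f})")
```

On this toy data the learned rule splits on days since last purchase, which is exactly the sort of human-readable threshold a business team can discuss and act on.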

As data scientists, we know that interpretable models are important. That's why we often employ 'interpretable' algorithms, like decision trees and linear regression.

But such algorithms can still learn non-interpretable features, which result in impossible-to-understand explanations. That's according to MIT and their Data to AI Lab. They highlight The Need for Interpretable Features, and propose a new feature taxonomy for domain experts. Check out this excellent summary, or the original paper.

Choose which model to use, and don’t just go for accuracy:

Having developed one or more ML models, it's time to evaluate them. Importantly, the goal is not necessarily to choose the model with the highest R² and be done with it. Unlike the fixed data sets with which I hope you compared your models (after all, how else can you compare them fairly if some work with apples and the others, oranges?), the real world in which you'll deploy your model is dynamic and unpredictable. That makes it important to go past standard machine learning metrics.
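Here's a small sketch of why a single score can mislead. The numbers and the names `model_a` and `model_b` are invented for illustration; the point is only that two models scored on the same held-out targets can rank differently depending on which question you ask.

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - residual SS / total SS."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def mean_abs_error(y_true, y_pred):
    """Average size of the error, in the units of the target."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def max_abs_error(y_true, y_pred):
    """Worst single miss, which matters if one bad prediction is costly."""
    return max(abs(t - p) for t, p in zip(y_true, y_pred))

# The same fixed hold-out set for both models (apples with apples).
y_true = [100, 120, 140, 160, 180, 200]
predictions = {
    "model_a": [100, 120, 140, 160, 180, 230],  # perfect, except one big miss
    "model_b": [113, 107, 153, 147, 193, 187],  # always slightly off
}

report = {
    name: {
        "r2": r2_score(y_true, y_pred),
        "mae": mean_abs_error(y_true, y_pred),
        "max_abs_error": max_abs_error(y_true, y_pred),
    }
    for name, y_pred in predictions.items()
}

for name, metrics in report.items():
    print(name, {k: round(v, 3) for k, v in metrics.items()})
```

In this made-up case, `model_a` wins on R² and mean absolute error, yet its worst miss is more than twice as large as that of `model_b`. Which one you prefer depends on how the predictions will be used, which is why it pays to look past one headline number.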

At the risk of sounding like an EvidentlyAI fangirl (which, I’d better just face it, I am), here's another one of their fantastic tutorials. It's all about evaluating ML models, with a Jupyter notebook containing the code, and some visualisations which excite me more than I care to admit.

Set that thing loose! But also, keep it under control 😅:

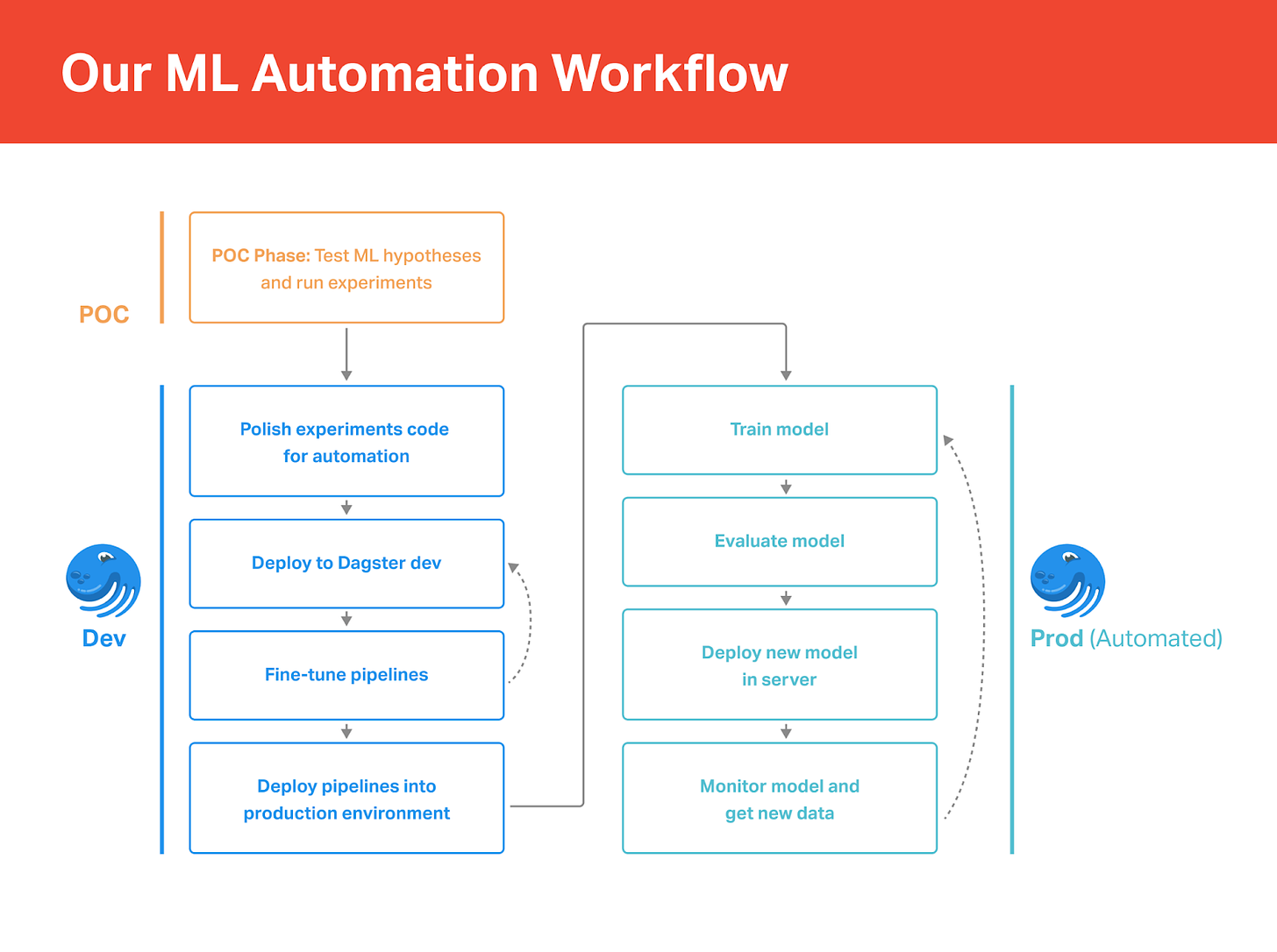

Of course, we don't usually just train a model, launch it to production, and forget about it. Most of the time, we have to regularly retrain the model, on an ever-updating stream of new data, and then deploy the new version. Doing this via automated pipelines can save time, and avoid deployment errors. It also allows you to train models more often, so they are better at capturing changing patterns in the input data.
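To give a feel for what one automated retraining run does, here's a deliberately tiny sketch in plain Python. It is not tied to any orchestration tool, the "model" is just a mean predictor, and the function names (`train`, `evaluate`, `retraining_run`) and data are all hypothetical. The part worth noticing is the gate at the end: the candidate only replaces the production model if it scores better on recent held-out data.

```python
from statistics import mean

def train(rows):
    """Toy 'model': predicts the mean target of its training rows."""
    return {"prediction": mean(y for _, y in rows)}

def evaluate(model, rows):
    """Mean absolute error on held-out rows (lower is better)."""
    return mean(abs(y - model["prediction"]) for _, y in rows)

def retraining_run(production_model, fresh_rows, holdout_rows):
    """One pipeline run: train a candidate, compare, promote only if better."""
    candidate = train(fresh_rows)
    candidate_error = evaluate(candidate, holdout_rows)
    production_error = evaluate(production_model, holdout_rows)
    promoted = candidate_error < production_error
    return (candidate if promoted else production_model), promoted

# Made-up (feature, target) rows: the target has drifted upwards over time.
old_rows = [(1, 10), (2, 12), (3, 8)]
fresh_rows = [(4, 19), (5, 21), (6, 20)]
holdout_rows = [(7, 20), (8, 22)]

production_model = train(old_rows)
model, promoted = retraining_run(production_model, fresh_rows, holdout_rows)
print("promoted new model:", promoted, "| prediction:", model["prediction"])
```

A real pipeline would add data validation, versioning and alerting around these steps, but the train, compare, promote shape stays much the same.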

Dagster is one very useful tool for performing such ML Ops tasks. In this tutorial, the data consultancy firm Thinking Machines demonstrates Developing a Continuous Training Pipeline with Dagster. Check it out, not just as an introduction to Dagster but also to get a feeling for a real-world ML Ops problem and just one possible way to solve it.

But a continuous training pipeline is only one thing to think about when productionising an ML model. So my final resource today is a fantastic article from the Google research team, highlighting the risk of building up technical debt in an ML system.

This article identifies several design anti-patterns, including boundary erosion, entanglement, undeclared consumers and hidden feedback loops, and suggests how your team could tackle them. Check it out: Machine Learning: The High-Interest Credit Card of Technical Debt.

Thanks for reading my second ever Substack resources roundup. I’m very much experimenting with the format, so I’d love your feedback. If you liked it, please share, recommend, or follow me. If I could do better, or you preferred my first attempt, leave me a comment below!

You can also follow me on Twitter, where I share these kinds of resources more regularly, but without the golden thread tying them all together. To each their own!

Want to learn more about bringing ML projects to production? You need my textbook, The Handbook of Data Science and AI! Whether you’re an aspiring ML practitioner or a data leader, there are chapters for you: from ‘Infrastructure’ and ‘Data Architecture’, to ‘Data Engineering’ and ‘Data Management’; from ‘Machine Learning’ and ‘Natural Language Processing’, to ‘Data Driven Enterprises’ and ‘How to Build Great AI’; and many more.