Explaining AI — to comedians?

Artificial Intelligence: it’s a topic surrounded by hype, hope, pessimism, and a healthy dose of confusion. Many people have many questions about it, as I have learned through discussions within my own circle. That’s why I was thrilled to be invited onto one of my favourite podcasts to answer non-experts’ questions on AI: and not just any non-experts, but a panel of comedians.

As you may imagine, with a room full of jokers, the episodes often turn into a hectic, hilarious excursion into the expert topic of the day. That was certainly the case for me, and it was a tonne of fun. Nevertheless, for those listeners who are unfamiliar with AI and are curious about the full answers I would have given — and some questions we didn’t get around to asking — this post is for you. Similarly, if you’ve stumbled across this post organically, having perhaps finally Googled a question about AI you were always afraid to ask, then this post should give you a robust, high-level introduction to this fascinating topic. You can also check out the podcast itself, on YouTube or by searching for @IDKATPODCAST on your favourite podcast platform.

What is Artificial Intelligence?

Honestly, AI is often hard to define: a great deal about it is written by non-practitioners, even non-users, of the technology, and even those working in the field often disagree with each other. One reason for this is that AI has a shifting definition: what was considered “AI” in the past may not be anymore. For example, an early definition would sound something like “AI is the process of automating tasks which would normally require human thought.” Thus, in the early “symbolic AI” days, researchers wrote simple, rule-based systems containing logic like “if X happens, then the outcome is probably Y”, and these would have been considered artificially intelligent “expert systems.” But the field has advanced so much since then that one would struggle to convince anyone that such a system is AI.

Nowadays, particularly given the parallels between Artificial Neural Networks (a cornerstone of current AI work, which we’ll get to later) and animal brains, we are more likely to describe AI as something like “simulating or mimicking natural intelligence (e.g. from animals or humans) within machines, especially within computer programs.”

My own personal definition is a mixture of both: “Giving machines, and especially computer programs, the ability to learn and complete tasks which would normally require some kind of thought.”

What are some types of AI you interact with every day?

This is just a list I brainstormed when I imagined the life of a Hollywood comedian; if your use cases differ, please leave a comment. I’d love to hear about them!

Making requests to your smartphone or home speaker

Searching the internet (the search engine suggests queries as you type, and uses tools such as a knowledge graph to serve results to you)

Consuming media (platforms like Netflix and YouTube recommend content to you, while also automatically detecting and blocking nudity, hate speech, and so on)

Online shopping (while the web shop gives you product recommendations, it may also be gathering data to predict, e.g., your customer lifetime value)

Driving in a self-driving car

Composing a message or email (Swype text, next word prediction and spell check)

Checking your email (spam filtering and automatic categorisation)

Unlocking your phone (facial recognition) and taking a photo (object detection is used to know where to focus)

Banking (fraud detection is looking out for you)

What is an algorithm?

An algorithm is a process, written in code, that tells a computer how to complete a task. In machine learning, though, we do not tell the computer how to perform the task itself. Instead, the algorithm tells the computer how to process the data so that it learns for itself how to produce the desired outputs.

How do machines learn?

The simplest methods for machine learning are based on statistics. For example, in spam detection, you count how many emails contain words like “winner”, “credit”, “card” and “hurry”, and how many of those emails are spam. You do that for every word in every email in your training set, turn those counts into probabilities, and use the probabilities to classify new emails.
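To make the counting idea concrete, here is a minimal sketch of a naive Bayes spam filter in Python. The six training emails and the function names (`train_spam_filter`, `spam_probability`) are made up for illustration, and this variant counts how often each word appears in spam and non-spam emails (multinomial naive Bayes with add-one smoothing), which is one common way of turning such counts into probabilities; real filters use far more data and extra tricks.

```python
import math
from collections import Counter

def train_spam_filter(emails):
    """Count words per class. `emails` is a list of (text, is_spam) pairs."""
    word_counts = {True: Counter(), False: Counter()}
    email_counts = Counter()
    for text, is_spam in emails:
        email_counts[is_spam] += 1
        word_counts[is_spam].update(text.lower().split())
    return word_counts, email_counts

def spam_probability(text, word_counts, email_counts):
    """Combine per-word probabilities (naive Bayes) into P(spam | text)."""
    vocab = set(word_counts[True]) | set(word_counts[False])
    total_emails = sum(email_counts.values())
    log_scores = {}
    for label in (True, False):
        n_words = sum(word_counts[label].values())
        # start from the prior: how common is this class overall?
        score = math.log(email_counts[label] / total_emails)
        for word in text.lower().split():
            # add-one smoothing, so an unseen word doesn't zero out the whole score
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        log_scores[label] = score
    # turn the two log scores back into a probability (subtracting the max avoids underflow)
    top = max(log_scores.values())
    spam, ham = (math.exp(log_scores[k] - top) for k in (True, False))
    return spam / (spam + ham)

# Hypothetical toy training set
emails = [
    ("winner claim your credit card prize hurry", True),
    ("hurry winner free credit", True),
    ("claim your free prize now", True),
    ("meeting notes for tomorrow", False),
    ("can you send the report", False),
    ("lunch tomorrow with the team", False),
]
word_counts, email_counts = train_spam_filter(emails)
print(spam_probability("hurry claim your prize", word_counts, email_counts))
print(spam_probability("send the meeting notes", word_counts, email_counts))
```

With this toy data, the first message should score close to 1 and the second close to 0.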

More recent techniques are based on trial and error, and on being shown the answers. It’s all about pattern recognition. You start with a model whose parameters are all just random: it’s essentially a guessing machine. You give the model a bunch of data points, and it tries to produce a desired output. Then you show it the answer, and how wrong it was. Based on the size and direction of this error (for example, if the task was to output a number, was the model’s output too high or too low?), the model adjusts all its parameters and tries again. It keeps doing this until it starts to discover patterns in the data that we humans may not have noticed, because there was too much information, and it was too complex, for us to juggle in our heads at once.
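Here is that guess-and-correct loop in its simplest form: a sketch, assuming a made-up task where the hidden pattern is “answer = 3 * x + 2” and the model has just two parameters, `w` and `b`. The update rule shown (nudging each parameter against the error, scaled by a small learning rate) is plain stochastic gradient descent on squared error, which is one common way of doing this, not the only one.

```python
import random

def fit_line(data, learning_rate=0.05, epochs=2000, seed=0):
    """Learn w and b in `guess = w * x + b` by repeated guessing and correcting."""
    rng = random.Random(seed)
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)  # start from a random guess
    for _ in range(epochs):
        for x, answer in data:
            guess = w * x + b
            error = guess - answer  # > 0 means too high, < 0 means too low
            # move each parameter against the error, in proportion to its size
            w -= learning_rate * error * x
            b -= learning_rate * error
    return w, b

# Hypothetical data: the hidden pattern is answer = 3 * x + 2
data = [(x, 3 * x + 2) for x in (0.0, 0.5, 1.0, 1.5, 2.0)]
w, b = fit_line(data)
print(round(w, 3), round(b, 3))  # prints: 3.0 2.0
```

The model is never told the rule; it only ever sees how far off each guess was, and in which direction.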

Once this process is complete, if you give your model a new data point without the answer, it has a pretty good chance of producing the right output. Of course, the new data point should resemble the points the model saw during training; you cannot train a model to distinguish between photos of pizza and of spaghetti, and then expect it to do well on a picture of a salad.

What is a neural network and when were they invented?

Consider a biological brain, such as ours: when we encounter a stimulus, our neurons become excited to a greater or lesser degree, and they pass this electrical signal on to other neurons.

An Artificial Neural Network is loosely inspired by this process. It’s a network of connected nodes, called “artificial neurons.” If you want to visualise it in a very simplistic manner, just think of a series of stacks of circles. Imagine all the circles in the first stack have a line connecting them to all the circles in the second stack. And the second stack is connected in the same way, to the next stack, and so on, for each stack of circles. Each stack is called a “layer”. So, for example, if you imagined three stacks of circles, this would be a three-layer neural network. The image below demonstrates this.

The first layer of artificial neurons receives an input signal in the form of numbers. Those numbers could represent anything, even music or images: it’s just a matter of encoding them. The neurons manipulate the input and pass it on, via the connections, to the next layer (and the next, and so on, if there are many layers). The final layer produces an output. Then we measure how wrong the output was, and we use that information to update the strength of the connections between the neurons. Basically, we’re changing how the input signal gets processed by the whole network. And then we try again, and again, and again. Once this is done enough times, we end up with a model that’s pretty good at producing the output we want for a given input.
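The same loop can be sketched for the three-layer network described above (2 input neurons, 3 hidden neurons, 1 output neuron). This is a from-scratch illustration, assuming sigmoid neurons, squared error, and the backpropagation rule for working out how much each connection contributed to the error; the class and function names are hypothetical, and real projects would use a library instead. The task, XOR, is a classic one that no single-layer network can solve.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

class TinyNetwork:
    """Three layers: 2 input neurons -> 3 hidden neurons -> 1 output neuron."""

    def __init__(self, n_inputs=2, n_hidden=3, seed=0):
        rng = random.Random(seed)
        # connection strengths start out random
        self.w_hidden = [[rng.uniform(-1, 1) for _ in range(n_inputs)] for _ in range(n_hidden)]
        self.b_hidden = [0.0] * n_hidden
        self.w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.b_out = 0.0

    def forward(self, x):
        # each hidden neuron weighs the inputs, adds a bias and squashes the result
        self.hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
                       for ws, b in zip(self.w_hidden, self.b_hidden)]
        return sigmoid(sum(w * h for w, h in zip(self.w_out, self.hidden)) + self.b_out)

    def train_step(self, x, target, learning_rate=0.5):
        out = self.forward(x)
        # how wrong was the output? (gradient of the squared error at the output neuron)
        d_out = (out - target) * out * (1 - out)
        for j, h in enumerate(self.hidden):
            # share the blame with hidden neuron j, using its connection strength before updating it
            d_hidden = d_out * self.w_out[j] * h * (1 - h)
            self.w_out[j] -= learning_rate * d_out * h
            for i, xi in enumerate(x):
                self.w_hidden[j][i] -= learning_rate * d_hidden * xi
            self.b_hidden[j] -= learning_rate * d_hidden
        self.b_out -= learning_rate * d_out

def total_error(net, data):
    return sum(0.5 * (net.forward(x) - t) ** 2 for x, t in data)

# XOR: output 1 only when exactly one input is 1
xor_data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
net = TinyNetwork()
before = total_error(net, xor_data)
for _ in range(5000):  # "and again, and again"
    for x, target in xor_data:
        net.train_step(x, target)
after = total_error(net, xor_data)
print(f"error before: {before:.3f}, after: {after:.3f}")
```

The exact numbers depend on the random starting weights; a network this small can occasionally get stuck before learning XOR perfectly, but the error should still go down.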

Since neural networks sound so complex, and since they are responsible for the astonishing developments in the AI world in recent years, you would be forgiven for thinking they are a very new idea. In fact, they have been around (at least conceptually) since the 1940s. The perceptron, for example, was an early pattern recognition machine proposed in 1958. The reason behind the recent buzz is that only in recent decades have we had enough data and computational power to really let neural networks shine.
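For a sense of how simple early neural networks were, here is a sketch of Frank Rosenblatt’s perceptron learning rule, teaching a single artificial neuron the logical AND function. The function names and the choice of AND as the task are illustrative assumptions.

```python
def predict(weights, bias, x):
    # the perceptron "fires" (outputs 1) if the weighted sum of its inputs exceeds 0
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0

def train_perceptron(samples, epochs=20, learning_rate=1.0):
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0 or +1
            # the weights only change when the prediction was wrong (error != 0)
            weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
            bias += learning_rate * error
    return weights, bias

# Logical AND: output 1 only when both inputs are 1
and_data = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_data)
print([predict(weights, bias, x) for x, _ in and_data])  # prints [0, 0, 0, 1]
```

A single perceptron like this can only learn problems where one straight line separates the two answers, which is one reason multi-layer networks matter.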

What is deep learning?

If you skipped to this question, please read the definition of neural networks, above, before you read on.

Deep learning is, put simply, stacking many neural network layers on top of each other. Deep neural networks are able to learn more complex functions between input and output than shallower models can. Consider a face detection model (an image recognition task), which is often built with a kind of deep neural network called a “Convolutional Neural Network.” The lower layers of the network learn to recognise simple things in the input, like lines. This matters because a line could indicate a feature of interest, such as the side of a nose. Intermediate layers then learn to combine those lines into simple shapes, like the two sides of the nose. Higher layers learn to combine those into even more complex shapes, such as the nostril area or an eyebrow. Eventually, these higher-level shapes help the network detect an entire face. The function from input to output here is complex and non-linear, and learning exactly this kind of function is what (deep) neural networks are particularly good at.
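To illustrate how a lower layer might pick out lines, here is a sketch of the core operation in a Convolutional Neural Network: sliding a small grid of numbers (a “kernel” or filter) over an image. The 4×4 image and the edge-detecting kernel are hand-made assumptions; in a real CNN the kernel values are learned during training, and each layer has many kernels. (Strictly speaking, this computes a cross-correlation, which is what most deep learning libraries call “convolution”.)

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and record how strongly each patch matches it."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# A tiny 4x4 "image": dark on the left, bright on the right, i.e. one vertical edge
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-picked vertical-edge detector; in a real CNN these numbers are learned
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
for row in convolve2d(image, vertical_edge):
    print(row)  # prints [0, 2, 0] three times: the edge lights up in the middle column
```

Higher layers apply the same operation to these outputs rather than to raw pixels, which is how simple lines get combined into larger shapes.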

What is the Turing test? Do you think you could pass it?

Alan Turing (1912–1954) was a famous English mathematician, codebreaker and computer scientist. In 1950 he proposed a method (which became known as “the Turing Test”) for deciding whether a machine can think. The idea is as follows: an interrogator questions a person and a computer without being able to see either of them. The interrogator knows only that one of these “entities” is called “X” and the other “Y”, and can ask questions such as “Can Y please tell me whether Y plays chess?” The machine’s goal is to convince the interrogator that it is the human, and that the human is the machine. If, at the end, the interrogator can’t tell which is which, then we would say that the machine can think.

Now that I’ve clarified this, I do hope you think you could pass the Turing test yourself!

What is the Chinese room experiment?

The Chinese Room Argument is a thought experiment devised by the philosopher John Searle in 1980. The idea is this: a person sits alone in a room and is fed Chinese characters, written on paper and slipped under the door. The person inside doesn’t understand Chinese, but they can consult a computer program which tells them how to manipulate the input to produce the required output. So they do this, and send those outputs back under the door. The person outside thus believes they are having a written conversation with a true Chinese speaker inside the room. But of course, they are not.

The point of this thought experiment is to show that the Turing Test is not an adequate test of intelligence, because we can program things that appear to understand but actually don’t. Another conclusion some people draw from the experiment is that minds are the result of biological processes, not computational ones, and thus the best we can ever do is simulate intelligence, not create it.

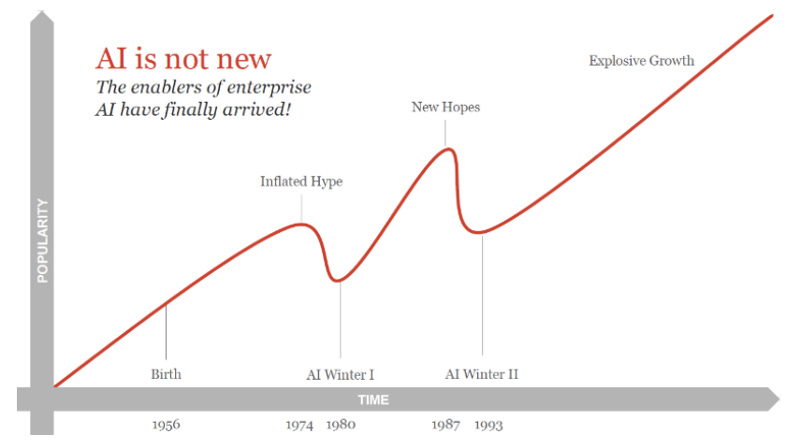

What is an AI winter and are we in danger of another one?

The AI winters of the past were periods of disillusionment in the field, when funding dried up and research slowed considerably. They were generally caused by too much hype and inflated expectations of what AI could do, which were then met with poor results and a lack of generalisability and scalability (in other words, of real-world applicability) of AI technologies.

Are we in danger of another AI winter? I have heard convincing arguments from the world’s experts on both sides of this question. Certainly there is a lot of hype, and certainly in some domains we are investing immense amounts of computational power (which comes at a cost) to achieve ever smaller, incremental improvements to the state of the art. So there appears to be a risk, but as to whether another winter is coming, I don’t believe anyone knows for sure.

What is the difference between ‘weak’ and ‘strong’ AI?

Weak AI is narrow: it can only do one thing. Computers are good at weak AI. For example, we can train a machine learning model to perform one specific task, such as distinguishing pictures of cats from pictures of dogs, at or near human level.

Strong AI is broader and more general. Humans are excellent at this: we might never be able to crunch huge datasets and identify a specific pattern for solving a specific problem, as a neural network can, but we can do so many things: move, speak, understand the world around us with all our senses, and much more. Much AI research aims to close this gap, for example by pre-training models on one task and fine-tuning them on others, or through “few/one/zero-shot learning”, which is about getting models to learn a new task from few or even no examples. But humans are still the masters at this.

What is ‘the singularity’?

“The singularity” refers to an “intelligence explosion,” in which an AI will keep on improving itself, iteratively, until we end up with a super-intelligence far greater than our own.

Some people fear we might reach the singularity as we try to build Artificial General Intelligence (AGI): a very strong AI, capable of understanding or learning any human intellectual task as well as we can. Yet this possibility is fiercely debated, with some people believing it inevitable and others, impossible.

How does playing ‘floor is lava’ help advance machine learning research?

This is an example of another kind of learning, called reinforcement learning. It works like this: the AI has a reward function that it is programmed to try to maximise, like scoring points. You don’t tell it how to score points; you just put it in an environment, give it points for some actions and take away points for others. So it learns from experience.

Simulating “floor is lava” is one example which has been used for this kind of learning: you would not explicitly tell the AI that the floor is bad and should not be trodden on; instead, you would simply deduct points whenever it stepped on the floor. Oh, and by the way, when I talk about an AI stepping on things, I don’t mean a physical entity like a robot, just some kind of simulation. An excellent example, featuring the game hide and seek, can be found on YouTube.
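Here is a sketch of “floor is lava” as a tiny reinforcement learning problem, using tabular Q-learning, a classic textbook algorithm. The level layout, the point values (+10 for the goal, -10 for lava, -1 per step) and all names are assumptions for illustration, and this is not how the hide-and-seek example was built. Note that the code never tells the agent that lava is bad; it only deducts points when the agent steps on it.

```python
import random

# Hypothetical level: S = start, G = goal, L = lava, . = safe floor
GRID = ["S...",
        ".LL.",
        "...G"]
ACTIONS = [(0, 1), (1, 0), (0, -1), (-1, 0)]  # right, down, left, up

def find(symbol):
    for r, row in enumerate(GRID):
        if symbol in row:
            return (r, row.index(symbol))
    raise ValueError(symbol)

START, GOAL = find("S"), find("G")

def step(state, action):
    """Move one square; return (new_state, reward, episode_over)."""
    r, c = state[0] + action[0], state[1] + action[1]
    if not (0 <= r < len(GRID) and 0 <= c < len(GRID[0])):
        return state, -1.0, False   # bumped into a wall: stay put
    if GRID[r][c] == "L":
        return (r, c), -10.0, True  # stepped on lava: lose points, episode ends
    if GRID[r][c] == "G":
        return (r, c), 10.0, True   # reached the goal
    return (r, c), -1.0, False      # every ordinary step costs a point

def best_action(q, state):
    return max(range(len(ACTIONS)), key=lambda a: q.get((state, a), 0.0))

def q_learning(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {}  # (state, action) -> expected future points; unseen pairs count as 0
    for _ in range(episodes):
        state, done, steps = START, False, 0
        while not done and steps < 50:
            # mostly do what has worked so far, but sometimes explore at random
            if rng.random() < epsilon:
                action = rng.randrange(len(ACTIONS))
            else:
                action = best_action(q, state)
            nxt, reward, done = step(state, ACTIONS[action])
            future = 0.0 if done else q.get((nxt, best_action(q, nxt)), 0.0)
            old = q.get((state, action), 0.0)
            # nudge the estimate towards "points now + discounted best points later"
            q[(state, action)] = old + alpha * (reward + gamma * future - old)
            state, steps = nxt, steps + 1
    return q

def greedy_path(q, max_steps=20):
    """Follow the highest-valued action from the start and record the squares visited."""
    state, path = START, [START]
    for _ in range(max_steps):
        state, _, done = step(state, ACTIONS[best_action(q, state)])
        path.append(state)
        if done:
            break
    return path

print(greedy_path(q_learning()))
```

After training, following the highest-valued action from each square should lead to the goal along one of the safe five-step routes (along the top or along the bottom), without touching lava.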

While this may all sound like pure fun and games, reinforcement learning has many practical uses. For example, retailers and media platforms could use it to train their recommender systems, which suggest products or content to you. Stock trading is another area of active research, as is dynamic pricing in commerce. Furthermore, reinforcement learning can help us to understand, and thus try to build, intelligence. That’s because it has many parallels with evolution, which ultimately led to our intelligence as humans.
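A recommender can be framed as a much simpler relative of this setup, the multi-armed bandit, often described as the most basic reinforcement learning setting: each round the system picks an item to show, and a click is the reward. Below is a minimal epsilon-greedy sketch; the three items’ click rates are invented for the simulation (the learner never sees them directly), and production recommenders are considerably more sophisticated.

```python
import random

def recommend_with_bandit(click_rates, rounds=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy bandit: each round, show one item and learn from whether it was clicked.

    `click_rates` are hypothetical true click probabilities used only by the simulated user.
    """
    rng = random.Random(seed)
    n = len(click_rates)
    shown, clicked = [0] * n, [0] * n
    for _ in range(rounds):
        if rng.random() < epsilon:
            item = rng.randrange(n)  # explore: try a random item
        else:
            # exploit: show the item with the best observed click rate so far
            item = max(range(n), key=lambda i: clicked[i] / shown[i] if shown[i] else 0.0)
        shown[item] += 1
        if rng.random() < click_rates[item]:  # simulated user: a click is the reward
            clicked[item] += 1
    return shown

shown = recommend_with_bandit([0.05, 0.10, 0.30])
print(shown)  # the third item (true click rate 0.30) should end up shown most often
```

The `epsilon` parameter controls the trade-off between trying new things and sticking with what has worked, a tension at the heart of reinforcement learning.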

How do Amazon’s Alexa and other smart devices understand what you want?

I actually wrote extensively on this topic in another post, “How Your Digital Personal Assistant Understands What You Want (And Gets it Done)”, where you can find the answer in the section “How Does Natural Language Understanding Work?”. I also discuss why language is so tricky to work with, what a digital personal assistant is, and what Natural Language Processing, Understanding, and Generation are.

By the way, if Natural Language Processing interests you but you are not a practitioner, and you speak German, then you may be interested in taking my LinkedIn Learning course “NLP Werkzeuge und Methoden.”

What is the trolley problem?

This is another famous thought experiment: imagine an out-of-control trolley (that is, a tram carriage) heading down the tracks towards five workers, who would all be killed by it. You are a bystander and can flick a switch to divert the trolley onto another track, where only one person is working. You would save five lives, but actively take one. Would you do it?

This question is highly relevant now, in AI ethics in general and in the domain of autonomous vehicles in particular. Questions abound: what should an autonomous vehicle do if faced with such a situation? And who would be liable if someone were injured or killed? The manufacturer? The software developers? Someone else?

A typical question would be something like: what if two children ran in front of an autonomous car, and it had to choose between killing them or swerving and killing the driver? Most people would choose the utilitarian option, which says to take the action that costs the fewest lives. But most people also expect that, if they were the driver, the car should protect them at all costs. That’s one of the reasons discussions on this topic are so tricky.

What is algorithmic bias and why is it dangerous?

Algorithmic bias occurs when an algorithm’s outputs systematically and unfairly disadvantage a certain group, for example by discriminating based on gender or ethnicity. It’s usually unintentional, and is often caused by poor choices in the development of the model, especially in the collection and preparation of the input data. That is, if the input data is biased, or simply does not represent certain groups, then the model’s outputs are likely to be discriminatory.
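One simple way to check a model’s outputs for this kind of problem is to compare how often each group receives the favourable outcome. The sketch below assumes hypothetical decisions for two groups and computes each group’s selection rate and the ratio between the lowest and highest; the 0.8 threshold is the “four-fifths” rule of thumb from US employment-selection guidelines. This captures only one notion of fairness, and a low ratio is a signal to investigate, not proof of discrimination.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Share of positive outcomes per group. `decisions` is an iterable of (group, approved) pairs."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates on this metric."""
    return min(rates.values()) / max(rates.values())

# Hypothetical model decisions for two groups of 10 applicants each
decisions = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 4 + [("group_b", False)] * 6
)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))  # prints {'group_a': 0.8, 'group_b': 0.4} 0.5
if ratio < 0.8:  # the "four-fifths" rule of thumb
    print("Warning: possible adverse impact against the lowest-rate group")
```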

Many examples of AI bias have received significant public attention in recent years, thanks to the efforts of whistleblowers and of organisations like the Algorithmic Justice League and Algorithm Watch. For example:

Austria’s AMS employment-worthiness prediction algorithm, which was built in order to determine how much welfare assistance was granted to different kinds of job seekers. The algorithm was found to discriminate against women, immigrants, and disabled people.

And, unfortunately, many more.

Will AI steal your job?

Like many of the questions I have posed here, this one has a mixed answer. If you have a hard, repetitive, perhaps physical job which doesn’t require a lot of human thought, then maybe your job will be automated. Truck driving is a good and well-known example. But for many jobs, any takeover will probably take many years, and may actually free you to focus on other tasks while automation handles the tedious ones. For example, if most bank queries are handled by a chatbot, then the more complex, interesting ones will get directed to a human: you.

If you have a job requiring faculties such as human thought, creativity, compassion, and emotion, then no, AI is a long way off from taking your job. Plus, AI will create a lot of new jobs, many of which we can’t even imagine yet.

Will AI destroy the world, or save it?

We didn’t get a chance to cover this question in my interview, which is a shame, as it is such an important and interesting one. And once again, it has many contrasting answers.

Much fear-mongering surrounds the singularity (see above) and the potential loss of jobs. There are often scary stories about AI, such as the one where Facebook shut down an experiment after its AIs “invented” their own language. But the reality behind such clickbait headlines is often far more mundane, as Roman Kucera shows in his excellent explanation of what really happened in that infamous case: “The truth behind Facebook AI inventing a new language”. I will admit that there are risks and unknowns. And when someone like Stephen Hawking warns us of the perils of AI, one has to take notice. But as I have stressed, we simply do not know; even the world’s best researchers are hotly debating this topic.

In the meantime, AI has the potential to do a great deal of good. Many people believe AI will help us find ways to solve the biggest problems facing our generation and the next. For example, it can be used in medicine to make more accurate diagnoses, or even to discover potential new drugs (it was used heavily for this during the COVID pandemic). It has diverse applications in environmental protection. It may help us design better cities, with better public transport, logistics, and so on. We are essentially limited only by our imagination. Fortunately, this unique facet of human intelligence is one which we have in abundance.

Thanks for reading!

If this article helped you, please give it a little clap, so I know to produce more content like it. You can also follow me here on Medium, or on Twitter (where I post loads of interesting content on AI, tech, ethics, and more), or on LinkedIn (where I summarise the best of my Medium and Twitter feeds). If you’d like me to speak at your event, please contact me via my socials or the contact form here.