Resources Roundup: Algorithmic bias, testing your preconceptions, and how to help build gender equality

A not-quite-BuzzFeed quiz to check your biases, an intro to critical algorithm studies, and ideas for enabling ethical developers.

About once a month, I compile a handful of the most interesting and useful things I’ve shared via Twitter on a specific theme into a short article about that topic. March is Women’s History Month, so this Twitter Resources Roundup focuses on the relationship between women and technology, particularly technology powered by AI and automated algorithms. Spoiler alert: there are plenty of problems. But there are also ways to help.

The Situation

Let’s get the bad news out of the way first. You’re surely aware of the problem of algorithmic discrimination against women and minorities. I kicked off this post with one example, and here’s another:

The Guardian recently investigated AI-driven content moderation and discovered that images of women were often oversexualized, leading to shadow bans and potential content suppression. For example, oiled-up male athletes in tiny shorts were no problem, but female joggers in sports bras were marked as ‘highly sexual’ and seem to have been suppressed on platforms like LinkedIn (view/share my original tweet about this).

This can be unfair to content creators. For example, female-led businesses creating products or content for women might get less organic reach than similar businesses aimed at men. That can have knock-on effects on wealth creation, which in turn affects financial inequality, and so on. The cycle perpetuates itself.

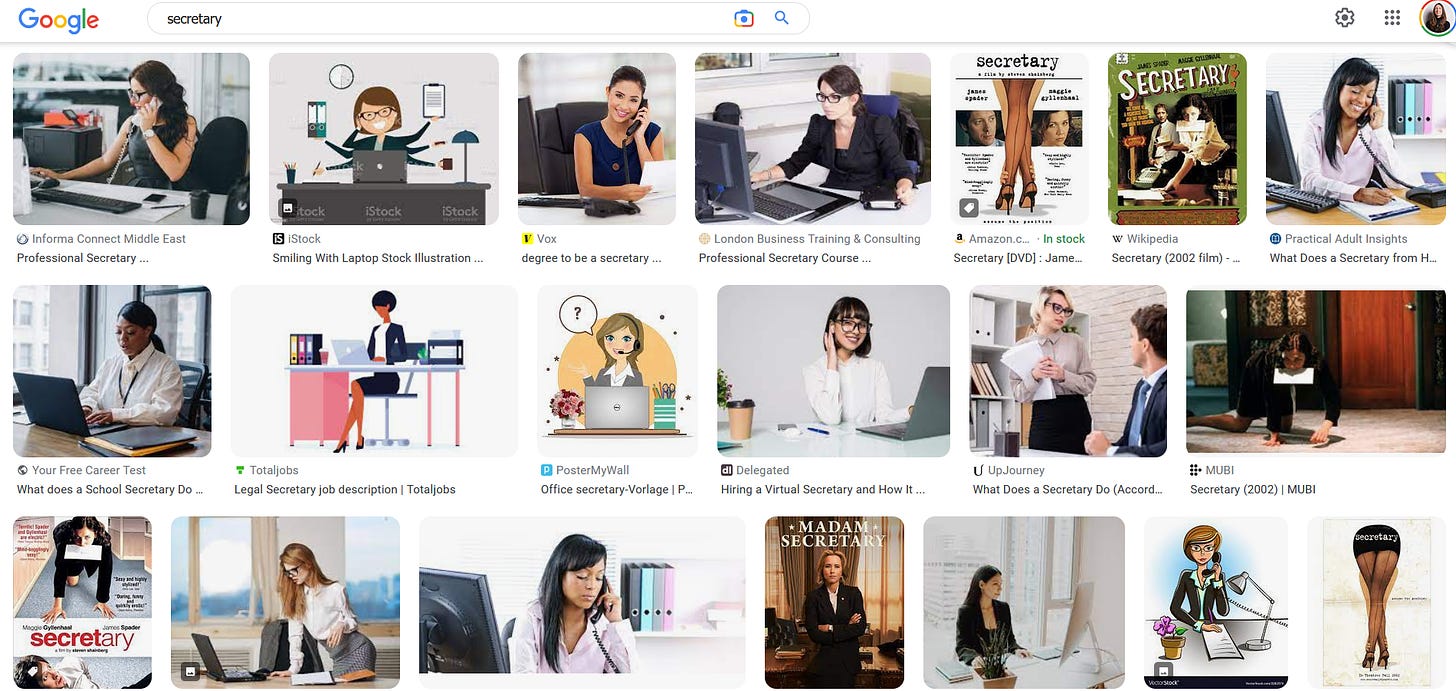

But what disturbs me even more about these two examples is that they both relate to the way algorithms shape our collective imagery of different genders in society. Worse, these concepts perpetuate themselves. Don’t believe me? Try a Google image search for ‘CEO’, and then another for ‘secretary’. What do you get? Mainly men in the first case, and about 99% women in the second. And what do you know: many of those women are pictured in short skirts, stockings and heels, several are bent suggestively over a table or even (gag) pictured on their hands and knees, and there are two stock photos with these lovely captions: ‘boss harassing his secretary’ and ‘charming secretary talking to her boss.’ I was reluctant to include those last two links, but found them so shocking that I felt I needed to prove they were real.

“So what?” you might say. “So most people visualise CEOs as men and secretaries as women, but I don’t!” But are you sure? Before you get defensive, remember that an association bias doesn’t mean you are setting out to disadvantage a particular group. We all have association biases, and that’s not a crime. They are the result of a lifetime of exposure to stereotypes like those displayed in Google Images.

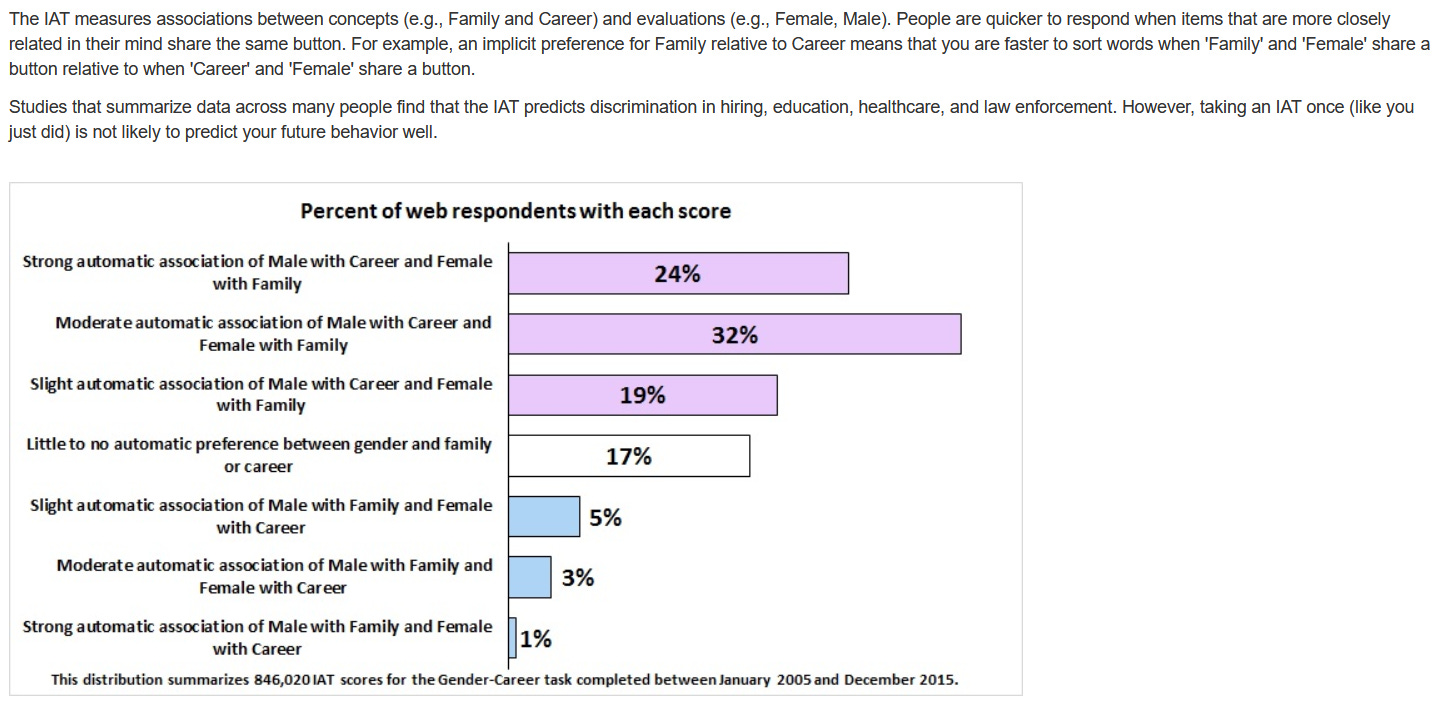

The Harvard Implicit Bias Test can help you uncover your own biases regarding gender, race, sexuality, age, and more. Try it, and I’m sure you’ll shock yourself. In the Gender-Career test I scored ‘strong automatic association of Male with Career and Female with Family’, despite the fact that I’m part of three organisations supporting women in their career goals, have run accelerator programs for female entrepreneurs, and am constantly trying to do my part to shift these biases (like writing this post). Scary, right?

“OK, so I’ve got a few stereotypes in my head,” you might say (having completed one of those tests). “What’s the big deal?” The problem is that our preconceptions about people (or about anything, really, such as products made by certain brands or in certain countries) can affect how we interact with them without our even knowing it. They lead us to make assumptions and judgements about people before we’ve even met them, and to treat them in ways that may put them at a disadvantage, even if we never intended to discriminate.

Let’s get back to tech, and specifically to digital assistants. Many companies claim that people prefer female voices for these applications, and often cite their own research confirming this. Yet 13 of the top 20 audiobook narrators are male. Are we unconsciously perpetuating gender inequality by designing our AI assistants to have female voices and appearances? (View/share original tweet).

Working backwards from this question, why does the contradiction exist in the first place? Is it because our subconscious, implicit biases (which place women in family and caregiver roles, or picture them as secretaries more often than as business leaders) make a female assistant feel more natural? Are we less comfortable delegating tasks to a male digital assistant because we’re so unused to seeing men in secretarial and similar roles? It would take a far longer blog post (and a far cleverer writer) to give these questions the coverage they deserve. But at a bare minimum, I believe we should all work to uncover our own cognitive biases, so that we can take steps to actively counter them.

What We Can Do About It

As a next step, those of us who produce, promote or profit from AI- and algorithm-powered tech (a.k.a. me and most of my audience) should be aware of how this technology may perpetuate inequalities. Only by really understanding the problem, and how we might be contributing to it, can we take real, concrete actions to reduce negative impacts and increase positive ones.

One way to start is through critical algorithm studies, a combined effort from fields such as sociology, science and technology studies, and media studies to investigate algorithms critically, with a specific focus on their potential for bias, discrimination and other social harms. Tarleton Gillespie and Nick Seaver compiled a fantastic critical algorithm studies reading list that can get you started (original tweet). It includes a book that has been on my to-read list for a while now: Safiya Umoja Noble’s "Algorithms of Oppression: Race, Gender and Power in the Digital Age," which uses an analysis of textual and media searches, as well as extensive research on paid online advertising, to demonstrate how search engines reinforce racism and sexism.

Speaking of books that take a critical perspective on AI, tech, and ethics, here are a few more by fantastic women that can help:

“The Equality Machine: Harnessing Digital Technology for a Brighter, More Inclusive Future,” by Orly Lobel

“Invisible Women: Data Bias in a World Designed for Men,” by Caroline Criado Perez

“More than a Glitch: Confronting Race, Gender, and Ability Bias in Tech,” by Meredith Broussard

(original tweet).

I’ll admit that translating a critical understanding of algorithmic risk into practice won’t necessarily be easy. For example, in The Ethical Agency of AI Developers, large-scale interviews with developers revealed significant gaps between how developers feel about implementing ethical technology and their agency to actually do so. The study also shows that developers often don’t realise just how many ethical decisions they make, and it provides some great recommendations for improving the situation (original tweet). But I believe it is absolutely worth it, and what better day to start than today, International Women’s Day?

Thanks for reading the third edition of my Twitter Resources Roundup. I’m experimenting with the format and would love to know what you think of it. In edition one, focusing on AI ethics, I was pretty light on commentary. In edition two, about bringing a machine learning project into production, I added a bit more and included a deep dive on one section. This time, I’ve gone for more of an opinion piece, and I’ve added links to the original tweets for you to view and share.

If I can do better, please let me know via Twitter! And if you like where I’m heading, please subscribe and share. Thanks!